TL;DR

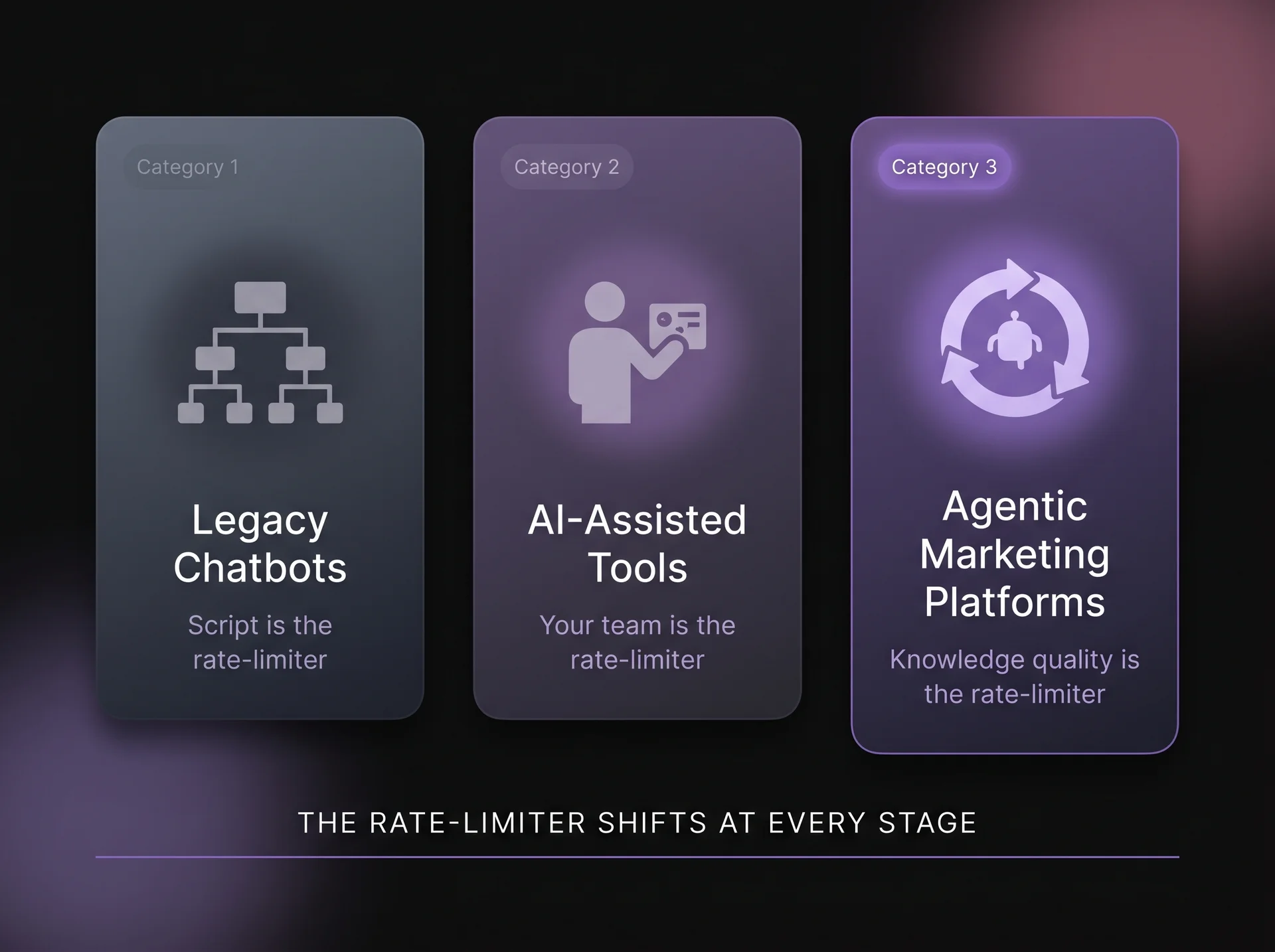

- Category 1: Scripted Execution (Legacy Chatbots): Rule-based flows. Work when buyers follow the expected path. Break the moment they go off-script.

- Category 2: Assisted Execution (AI-Assisted Tools): Make your team faster when present. Every step still requires a human to initiate it. When your buyer is active and your team is not, these tools are doing nothing.

- Category 3: Autonomous Execution (Agentic Marketing Platforms): Execute the full buyer engagement motion without waiting for your team. Engage, qualify, route, book, and log — autonomously, under governed guardrails, 24/7.

- Most B2B teams in 2026 are mid-Category 2 and believe they are further along. This map shows you where your stack actually sits — and what moving to Category 3 requires.

Most revenue leaders benchmarking their AI stack are asking an incomplete question: which tool has the best AI? The more revealing question, the one that actually determines whether your inbound motion improves, is: which tools can act without your team present, and which only help your team act faster?

But the distinction is architectural, not cosmetic, and it determines where your inbound pipeline leaks and what category of fix will close the gap. There is a consequential difference between AI that assists a human workflow and AI that runs one. The first category has matured. Drift, Qualified, HubSpot's AI features are competent, widely deployed, and increasingly commoditized. While they might have made the baseline better, they have not broken through it.

What is emerging, agentic marketing, is not an upgrade to the tools you have. It is a different architecture: systems that qualify, engage, route, and follow up without waiting for your team to be in the room.

Why B2B GTM Teams Are Stuck in the AI-Assisted Era (And Don't Know It)

The most common misconception is that deploying AI tools constitutes having an autonomous inbound motion. Most teams have built an AI-assisted team — one that moves faster when it's online, makes better decisions when it's present, and produces more content when it's at a desk. The team remains the rate-limiter. The inbound motion still waits for the team.

Why AI Adoption Hasn't Moved B2B Win Rates

96% of B2B marketers now use AI somewhere in their role — across research, content production, lead scoring, and call summaries. Three out of four GTM teams face CEO-level pressure to demonstrate that AI investment is producing results. By any measure of adoption, the tools are deployed and the workflows have changed.

Against that backdrop, the average B2B win rate still sits at 20 to 21%. Four in five opportunities don't close. That figure hasn't shifted in proportion to AI investment. The dashboards show tool usage. The pipeline numbers show something more complicated.

The tools aren't failing. Most of them work exactly as advertised. The problem is that productivity and pipeline accountability are different problems — and every tool deployed at scale is built for productivity, not pipeline.

What Does a Typical B2B AI GTM Stack Look Like in 2026?

Ask any revenue team to describe their current AI stack and the answer is usually some version of the same picture: ChatGPT for drafts and research, HubSpot AI for call summaries and scoring, Salesforce Einstein for next-best-action suggestions when a rep is already in the CRM, Jasper or Writer for content at scale.

Within its scope, it works. Reps write better emails faster, content ships more consistently, scoring surfaces the right accounts more reliably. But every stage requires a human to initiate it.

On the website, 58% of B2B companies have integrated a chatbot — most of them rule-based flows built on the Drift-era playbook that predates large language models. 52% of demand gen teams use those chatbots as their primary top-of-funnel qualification tool. The martech stack as a whole has expanded to nearly 16,000 solutions, and 57% of marketers report feeling overwhelmed by what they already own.

None of that is a criticism of the stack. These tools were deployed for good reasons and they deliver on their core promise. The issue is that the core promise of each one involves a human initiating the action.

The Rate-Limiter Problem: Why Human-Initiated AI Tools Cap Out

Every tool above waits for a human to open it. A rep opens ChatGPT and types a prompt. A marketer logs into HubSpot and pulls the summary. In each case, a human has to take the first step before the tool does anything — which means the tool's output is bounded by when and whether the human shows up.

When a high-intent buyer lands on your website at 11pm with a specific evaluation question, every tool in that stack is effectively asleep. The buyer is not. Over 98% of B2B website visitors leave without taking any action — and a meaningful portion of them were mid-evaluation buyers who arrived with real questions and left because the right response didn't happen at the moment they were ready.

That isn't a traffic problem, a content problem, or a follow-up speed problem. It's an operating model problem. And it can't be solved by adding another tool inside the same category.

The B2B AI GTM Market Map: Chatbots vs. AI Copilots vs. Agentic Marketing Platforms

The most persistent mistake in evaluating AI GTM tools is treating them as points on a sophistication spectrum. The actual difference isn't a feature. It's the answer to one question: what is the system's rate-limiter, and when does it apply?

Category 1 — Legacy Chatbots: What They Do and Where They Break

These tools run conversations on a predetermined script. They handle the paths you anticipated: route to sales, deflect to FAQ, capture a form fill. The moment a buyer asks something the tree wasn't built for, the experience breaks or bounces back to a form. The rate-limiter is the script itself.

Tools in this category:

- Drift (now Salesloft): pioneered conversational marketing; playbook-driven; bionic chatbots learn from sales conversations but remain anchored to workflows.

- Intercom (basic flows): primarily support and engagement-oriented; chat is rule-based unless upgraded.

- HubSpot Conversations (chatbot builder tier): drag-and-drop bot flows for routing and FAQ deflection.

- Freshchat: conversation routing, simple qualification prompts.

The ceiling: the buyer who follows the expected path, asks the expected question, and arrives at the expected time. Everyone else bounces.

Category 2 — AI-Assisted Tools: Why Copilots Still Need a Human in the Room

These tools make your team faster — drafting, summarizing, scoring, surfacing the next action. The work improves. But every single step still requires a human to open the tool, type the prompt, and act on the output. The rate-limiter is your team's availability, not the technology.

Tools in this category:

- HubSpot Breeze AI: lead scoring, call summaries, email drafts, content variants.

- Salesforce Einstein Copilot: next-best-action surfaces when a rep is in the CRM; does not engage buyers without human initiation.

- Jasper and Writer: brand-safe content generation at scale; human-led.

- ChatGPT Teams and Copilot for M365: research, drafts, synthesis; prompt-dependent.

- Qualified (partially here): fast account-based routing with Salesforce-native logic; optimises for speed-to-rep, not autonomous discovery. Qualified's ABM signal-matching is more sophisticated than legacy chatbots, but its conversations are still guided by predefined logic and its goal is speed-to-rep — not autonomous execution. That puts it here, not in Category 3.

The ceiling: your team is faster when present. When your buyer is active and your team is not, every one of these tools is doing nothing.

Category 3 — Agentic Marketing Platforms: What Autonomous Execution Actually Means

These platforms don't wait for a human. They engage the buyer on arrival, answer evaluation questions from governed knowledge, qualify intent inside the conversation, route to the right person, book the meeting, and log full context to CRM. The full motion completes whether your team is online or not. The rate-limiter shifts from human availability to the quality of the knowledge the agent operates from.

Tools in this category:

- Docket: AI Marketing Agent; governed knowledge foundation; qualifies, routes, books, and syncs full context to CRM autonomously.

- 1Mind (Salesloft partnership): buyer-facing AI teammates that qualify and demo; positioned as Superhumans.

- Spara: AI-led inbound qualification with autonomous conversation handling.

The critical distinction from Category 2: answers come only from approved, governed knowledge — not open-ended LLM inference. That's what makes autonomous execution safe enough to trust with a live buyer conversation.

One concrete data point that illustrates the gap between categories: across Docket deployments, the AI Marketing Agent achieves a 36% conversation start rate against approximately 13% on legacy form flows (observed across live deployments, not controlled conditions). That gap isn't a design improvement. It's what happens when the rate-limiter shifts from the form to the conversation.

Why Comparing AI Marketing Tools by Features Gets B2B Buyers the Wrong Answer

Buying decisions go wrong when the evaluation framework is borrowed from the vendor selling the thing being evaluated. When a team asks "which chatbot should I buy?" and evaluates three chatbots against each other, they'll select a chatbot — and if their underlying pipeline problem is a Category 3 problem, they'll be back at the evaluation table in twelve to eighteen months, having spent the intervening time optimising within a ceiling they couldn't see from inside it.

The question worth asking before any vendor conversation is not which tool has the strongest feature set. It's which operating model you're building toward — and whether the problem you're trying to solve lives inside the same category as the tool you're about to buy.

Most B2B GTM teams in 2026 sit in mid-Category 2: AI tools deployed, team moving faster, inbound motion still dependent on someone being online to receive and act on what arrives. That ceiling is structural. It can't be raised by adding more Category 2 tools or upgrading within Category 2. The architecture itself has to change.

The diagnostic question is not complicated: where does your inbound motion break down when your team isn't in the room?

→ Read more: Why Inbound Is Broken (And How an AI Marketing Agent Fixes It)

How to Tell If an AI Agent Is Actually Autonomous: 4 Questions to Ask in Any Demo

A scripted chatbot and a fully autonomous AI Marketing Agent can present an identical chat widget on your website — identical visual design, identical entry prompts, identical branding. The visual difference between them is zero. The architectural difference determines everything about what happens from the moment a buyer types their first message.

Four questions place any tool accurately on the map. They're worth asking in any vendor demo before the conversation moves to pricing, implementation timelines, or case studies.

- Does it act without a human initiating the session?

A tool that requires a Slack notification to be acknowledged, a queue to be accepted, or a rep to log in before the buyer interaction can begin is a Category 2 tool — regardless of how it's positioned in the vendor's marketing materials. The test isn't what the tool can do in principle. It's what happens when the buyer arrives and no human is on the screen.

- Does it answer from governed, approved knowledge — or from open-ended inference?

An agent that generates answers from general large language model reasoning can confidently misrepresent your pricing, your security posture, or your competitive positioning to a live buyer. That's not a trust concern or an edge case. It's a liability, and it compounds with scale.

- Does it qualify, route, and book as part of the same conversation?

The full motion — from first message to meeting booked — should complete without a human checkpoint inserted between qualification and conversion. If a human handoff is required before the meeting gets booked, the system is Category 2 at the conversion layer even if it handles earlier steps autonomously.

- Does it log full context to the CRM without manual input?

The rep's first call should begin with a context card: qualification status, stated pain points, the specific questions the buyer asked, and a documented next step. If the CRM record is a name and an email address, the previous three steps didn't complete in any meaningful sense.

If the answer to any of those questions is no, the tool belongs in Category 2. Most platforms that call themselves agentic cannot demonstrate all four of these in a live demo. That's the test.

Governed AI Marketing: What Enterprise Buyers Need to Verify Before Deploying an Autonomous Agent

Every serious conversation about deploying autonomous AI in a customer-facing context eventually arrives at the same question: what if it says something wrong? It's a fair question, and it deserves a better answer than most vendors provide — which is usually a combination of reassurance and a pivot back to the demo.

An agent operating from open-ended inference can hallucinate pricing it's never been given. It can misstate a security certification the product doesn't hold. It can answer a question about your competitive positioning in a way that creates legal exposure. These aren't theoretical failure modes. They're the operational reality of deploying a large language model without a governance layer over what it's allowed to say and which sources it's allowed to draw from.

The capability question — "can this agent have a conversation?" — is separate from the risk question — "what happens when the conversation goes somewhere the agent wasn't prepared for?" Most buyers ask only the first one.

What makes a Category 3 platform enterprise-safe isn't the autonomy. It's the governance architecture the autonomy operates inside. In practice, that architecture has four components that should be verifiable in any vendor demo — not promised in a sales deck.

- Guardrails define the scope of what the agent can and cannot say, and the topics it's required to escalate to a human rather than answer on its own authority.

- Escalation triggers create real-time alerts when a conversation crosses a threshold: a pricing commitment outside a defined range, a legal question the agent isn't authorised to answer, a buyer flagged in the CRM as requiring executive attention.

- Approved knowledge means the agent draws answers only from versioned, permissioned sources — product documentation, security materials, approved competitive responses — not from open-ended reasoning.

- An audit trail captures not just what the agent said but which source it drew from, making every answer reviewable and every deployment decision defensible.

When Demandbase connected their governed knowledge foundation to Docket's AI Marketing Agent, 93% of seller queries were automated and full deployment was completed in under two weeks. The speed was possible because the knowledge architecture was governed before it was handed to the agent.

The governance question isn't a reason to delay Category 3 adoption. It's the thing you resolve before you deploy — not the thing you discover after.

→ Read more: How to Evaluate AI Marketing Agent Vendors: A 5-Question Demo Script

How to Diagnose Which AI Marketing Category Your GTM Stack Is In (3 Questions)

Most teams overestimate how far along the maturity curve they've moved — and the overestimation is a rational response to how Category 2 and Category 3 tools are marketed. A tool that calls itself an AI agent and presents a conversational interface reads as Category 3 until you apply the four questions above.

Three questions place your current stack on the map without requiring a vendor conversation at all.

- When a buyer lands on your website outside business hours and asks a real evaluation question, what happens?

If a form appears, a notification queues, and someone follows up the next morning — your inbound motion is Category 1 at the point of buyer contact, regardless of what AI your team uses during the day.

- Does your team need to act before buyer engagement can begin?

If a notification needs to be accepted, a queue cleared, or a rep logged in before anything happens — human presence is still the architectural rate-limiter. That's Category 2.

- When a qualified lead arrives in your CRM, what does the record contain?

A name, an email, and a company is a Category 1 handoff. Qualification status, stated pain points, specific questions asked, and a documented next step is a Category 3 handoff. The difference determines whether the first call recaps what should already be known — or advances a deal from an informed position.

→ Self-diagnose further: The AI Marketing Maturity Model — Which Stage Are You In?

Category 2 in practice looks like a team that's more productive than it was two years ago. Reps are better informed, content ships faster, the right accounts get the right attention more consistently. The inbound layer still leaks at the moment of highest buyer intent — because every action at that layer still waits for a human, and the buyer doesn't wait.

Moving to Category 3 is an architecture decision, not a tool upgrade. The agent executes the full engagement motion: it answers the buyer's question, qualifies their intent within the same conversation, routes to the right person, books the meeting, and delivers a fully populated context record to the rep before the first call happens. The human sets the objectives, defines the guardrails, and reviews outcomes. The inbound motion operates whether the team is online or not.

"In just two weeks, Docket's AI Marketing Agent generated 23 meetings — over five times our baseline conversion rate. What surprised us most? 77% of those meetings were booked outside business hours. That's a pipeline we simply would have missed." — VP Marketing, B2B Marketing Analytics Company

The pipeline they'd been missing wasn't a targeting or budget problem. It was a timing problem: buyers arriving at hours the previous architecture was never built to serve.

Chatbots vs. AI Marketing Agents: What You're Actually Choosing Between

The market map doesn't tell you what to buy. It tells you what you're actually choosing between. If your stack is Category 2 and your pipeline problem is a Category 3 problem, the gap isn't going to close with another AI-assisted tool.

If you want to see what Category 3 looks like in a live deployment rather than a prepared demo, the Docket AI Marketing Agent is running on docket.io. Ask it a pricing question. Ask it a security question. Ask it what makes Docket different from Qualified. Those are the conversations your current chatbot ends. That's the test.

→ See it live: Talk to Docket's AI Marketing Agent

→ Read next: What Is an AI Marketing Agent? The Buyer-Facing Definition