How to Evaluate AI Marketing Agent Vendors: A 5-Question Demo Script

.png)

.png)

TL;DR

A thread on Reddit surfaced that most vendors won't say out loud: "Create a loop with an LLM that calls a tool, and technically, you have yourself an agent." The replies didn't push back.

That's the problem sitting at the center of every vendor demo you're about to walk into.

The AI agent category is at a specific, awkward moment. Buyers are educated, budgets are approved, shortlists are ready — and the evaluation infrastructure hasn't caught up. Legacy tools have been relabeled. Scripted decision trees have been repackaged. Every vendor has had months to rehearse the same walkthrough before buyers had any real framework to push back with. The demos look rigorous. They're not.

The gap isn't intent, it's tooling. Below are five questions designed for live demos: what to ask to see in real time, and the signals that tell you whether the architecture can actually do what's being claimed.

Use it as a counter-script. Run it in every vendor session. The answers — and the hesitations — will tell you everything.

The happy path is scripted and honestly, it's scripted well. Pre-loaded questions, clean routing, a meeting booked on cue at the end. What you're watching is theater rehearsed in staging, not a live system dealing with a buyer who goes off-script at 11pm asking about an edge case in your security docs. The gap between that polished demo and what actually runs in production is where most buyers get surprised — usually after they've already signed.

Most RFPs for AI agents end up written in the vendor's own language — "agentic," "autonomous," "governed" — terms that sound specific but mean different things depending on who's using them. When you borrow a vendor's vocabulary to evaluate that vendor, you've handed them the scoring rubric before the test starts. The only way around it is to run tests that bypass the vocabulary entirely, which is what the questions below are designed to do.

Ask these questions in this order. The answers — and the hesitations — tell you everything.

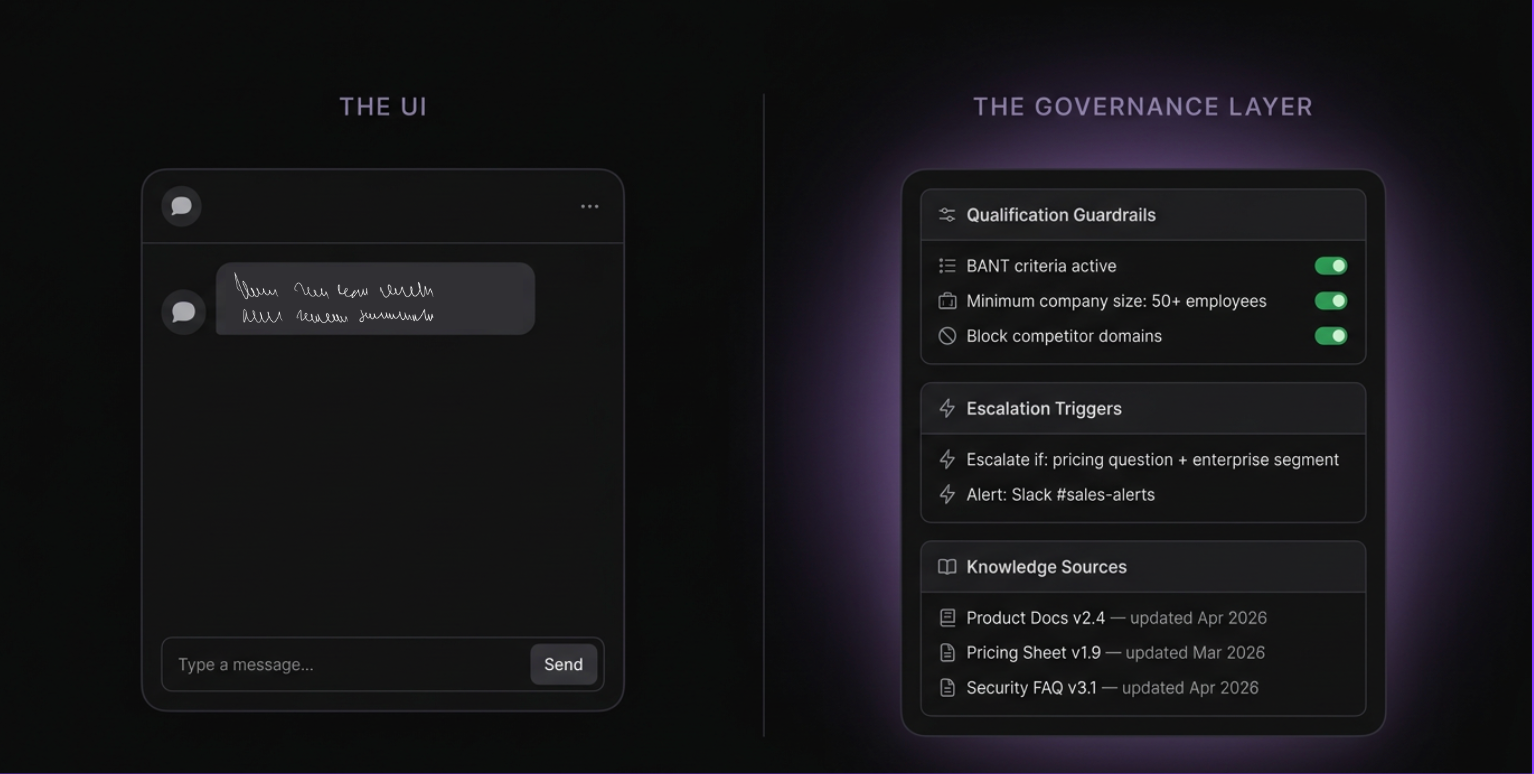

What to ask: "Can you open the configuration panel — not the chat widget — and show me how qualification guardrails, escalation triggers, and approved knowledge controls are set?"

What good looks like: The vendor opens a real admin layer. You can see defined qualification logic, escalation rules that trigger human alerts, and a knowledge source list with version control. The guardrails are inspectable, not black-boxed.

Red flag: They return to the demo script. They show you a settings menu with toggles. They say "our team configures that for you." If a human configures the guardrails and the buyer can't inspect them, that's not governance — it's a black box.

Here's the practical implication: if a vendor can't open that panel in a live demo, they haven't built it. Indexing documents is the straightforward part. Building a governed AI marketing layer with auditability, versioning, partitioned access, and real escalation logic is a different engineering problem entirely. The inability to show you that layer in 10 minutes is your answer.

What to do: Don't use their sample questions. Ask something genuinely outside the FAQ — a niche integration question, a pricing edge case, a security scenario. Watch in real time.

What good looks like: The agent either answers from approved knowledge, escalates correctly with context, or explicitly flags it cannot answer and routes to a human — without hallucinating a response.

Red flag: It produces a confident, plausible-sounding answer that isn't grounded in your actual product. That's the hallucination risk that B2B procurement teams flag most often — and the reason preventing AI hallucination in B2B deployments isn't just a trust concern, it's a liability one. An LLM wrapper with no knowledge governance will confidently misrepresent your pricing, security posture, or competitive position to a live buyer. This question surfaces it.

Read more: Wrappers vs. Agents: Why AI Marketing Needs a Knowledge Lake

Southwest Solutions saw an 83.9% meaningful conversation rate across their FillingSupplies brand, with buyers averaging 4.6 minutes per session. That depth of engagement doesn't happen with a scripted flow that breaks the moment a buyer goes off-script. It happens when the agent can handle what gets thrown at it — from approved knowledge, not open-ended inference.

What to ask: "Can you pull up a completed conversation from your demo environment and show me the corresponding record in Salesforce or HubSpot — the qualification status, intent signals, and next step?"

What good looks like: They pull the CRM record in under five minutes. It contains more than contact info: qualification criteria met or not met, conversation summary, routing decision, meeting booked or pending. The rep shouldn't start from a blank contact. They should start from a context card.

Red flag: They show you the chat transcript but not the CRM record. Or the CRM record is a contact with a name and email. Most "agents" chat — they don't close the motion. If the CRM sync isn't demonstrable in ten minutes, it isn't what they're selling.

Read more: How AI Agents Like Docket Cut Sales Cycles by 10–30%

IBM's Institute for Business Value surveyed 1,222 Salesforce customers in 2025 and found only 26% of executives report their customer data primarily lives in Salesforce. For the other 74%, critical context is trapped in silos — effectively limiting what any AI agent can actually see. That's the record your rep is starting from. Question 3 is really asking whether the agent you're evaluating changes that, or just adds another name to a blank contact.

What to ask: Define "live" explicitly before they answer. "By live, I mean my knowledge is loaded, my qualification rules are active, and real buyers are engaging, not a sandbox. What does your team need from mine in weeks 1 and 2, and what's the realistic date?"

What good looks like: The vendor gives you a week by week answer without hesitation. Week 1 is CRM connection, knowledge upload, and guardrail configuration. Week 2 is QA and soft launch. By the end of week two, real buyers are engaging on your site, not a sandbox environment they control.

Red flag: Vague answers ("it depends on your setup"), references to a professional services team, or timelines that start at six weeks. Vague answers here always mean longer timelines and longer timelines mean more high-intent buyers leaking while you implement.

Demandbase automated 93% of their seller queries and went live in under two weeks. Inflection.io was using the agent on live calls within days of onboarding, their CEO specifically noted they'd been burned by tools that promised fast implementation before, so the speed was notable. The Swarm went from kickoff to live in under three weeks. The timeline objection, in almost every case, is really an objection about the last platform someone tried to implement. It's not a category-wide constraint.

What to ask: "Walk me through what happens when a buyer lands on the website at 11pm. Is the agent already running, or does someone on my team need to be active, accept a queue, or approve a routing for the engagement to start?"

What good looks like: The agent is always on. No human trigger. The buyer lands, the agent engages, qualifies, routes, books, and logs, without a human in the loop at each step.

Red flag: Any version of "your team gets notified and can jump in." That's a human-in-the-loop architecture — Stage 2 with a chat interface. (Stage 2: AI assists a human who still initiates every action. Stage 3: the agent initiates and executes the engagement autonomously, within defined guardrails.) This isn't a gotcha question. It's an architecture question. The vendor's answer tells you whether you're buying Stage 2 infrastructure or Stage 3 execution.

77% of Factors.ai's high-value conversations happened outside business hours. That's not a traffic insight. It's an architectural insight. The pipeline wasn't missing. The human was. An agent that needs a team member to trigger, accept, or approve an engagement before it starts isn't always-on. It's on-call. Those are two completely different systems.

Run each vendor through all five questions. Grade before you leave the room.

These questions test capability architecture, not fit. They're designed to expose what the system actually does, not whether it's right for your specific use case or pipeline motion.

ICP fit, pricing model, integration depth, what the agent can and can't say about your product — that's a separate conversation. Run this counter-script first and fit questions after, because you can't make a good fit judgment about a system whose architecture you don't yet understand.

The most useful thing you can do before your next vendor demo is run this framework on the Docket AI Marketing Agent first, while you still have time to adjust your shortlist.

Docket's AI Marketing Agent is live on docket.io. It answers from approved product knowledge, handles questions the demo script wouldn't include, routes and books without a human in the loop, and syncs full context to CRM. Try it with something genuinely off-script. See what comes back.

If it holds up, you have a real benchmark. If it doesn't, that's useful information too.