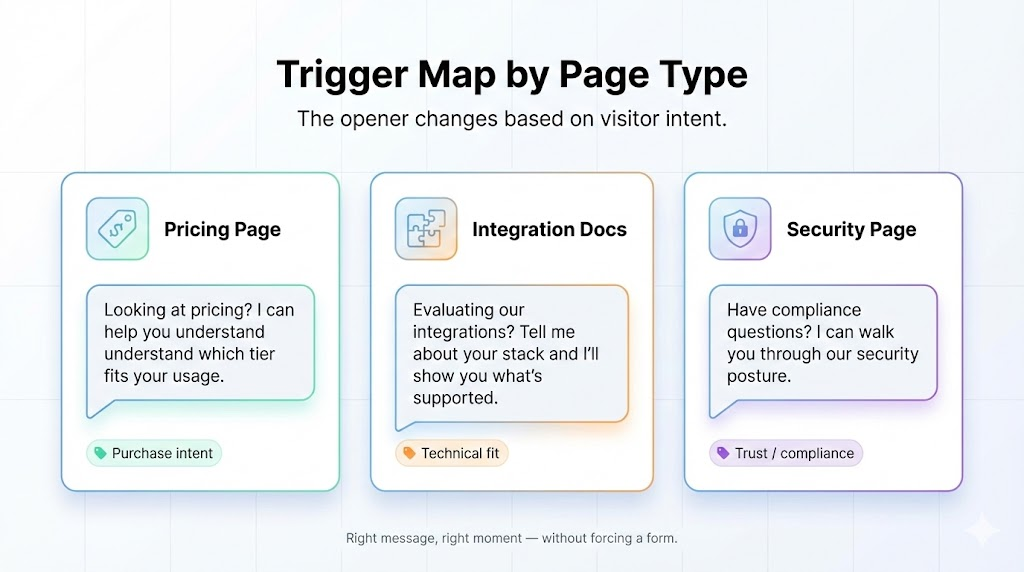

It's late evening. A buyer from a target account is on your pricing page, comparing you side-by-side with a competitor. They're trying to understand deployment complexity, security posture, and whether you fit their timeline.

No rep is involved yet. Your website is the only interface.

That's the modern B2B buying moment. And it's the exact moment where most chatbots quietly fail. Because most chatbots were built to route conversations, not support evaluation. They decide where a conversation goes, not whether it should progress toward a buying decision. In 2026, that difference matters.

It matters because these two tools belong to different operating eras. Chatbots are an Assisted Marketing tool — they help humans route faster, but humans remain the execution layer for every meaningful exchange. AI agents are the execution layer of Agentic Marketing — a new operating model where agents handle discovery and qualification autonomously, under human governance, and humans step in where judgment actually matters.

Why Chatbots vs AI Agents Is Suddenly an Urgent Question

Until recently, the "chatbot vs. agent" question didn't exist. Chatbots were the only option. But LLM-based systems have made it possible to build agents that reason through scenarios, not just route visitors to forms or calendars. That shifts what's possible and what buyers now expect from website conversations. This is the Assisted to Agentic shift playing out at the product level. Assisted tools routed. Agentic systems execute. The vocabulary changed last. The buyer behavior changed first.

B2B buying is long, non-linear, and multi-stakeholder. Gartner research shows the average B2B buying group includes 6-10 decision-makers, and buyers complete the majority of their research before engaging sales. Your website isn't just a lead capture surface anymore. It's where evaluation happens.

Buyers return multiple times. They ask "risk questions" early: integrations, security posture, compliance, pricing logic, deployment constraints, internal approvals.

When your website can't handle those evaluation-grade questions in the moment, the failure pattern is predictable:

- High-intent visitors bounce

- Pipeline gets noisier

- SDRs restart discovery from scratch

- Buyers repeat themselves across every interaction

The question isn't whether to have website conversations. It's whether those conversations can carry the weight of real evaluation.

B2B Chatbots: What They Are and What They’re Good At

A B2B chatbot is a conversational interface that:

- Engages visitors

- Answers predefined questions

- Captures lead information

- Routes to sales or support

Its primary job is orchestration, not selling or solutioning.

When chatbots work well

- Routing decisions ("Are you looking for sales or support?")

- Basic qualification gates (email, company name, role)

- FAQ deflection

- Meeting scheduling

Chatbots are also "safer" by design. They're bounded: limited freedom means fewer surprises. For high-volume, low-complexity interactions, that predictability is valuable.

The limitation

Chatbots determine where a conversation should go, not whether it should progress toward a buying decision. That's a significant gap in B2B, where evaluation questions are rarely single-intent. A buyer asking about "pricing" might actually be asking about scalability, commitment flexibility, or competitive positioning, all at once.

AI Agents: What They Are and How They Differ from Chatbots

An AI agent doesn't just answer questions. It runs a lightweight discovery process. It clarifies constraints, maps needs to capabilities, surfaces tradeoffs, and recommends a path forward.

Think of it as an early-stage AE or SE embedded in your website.

What this looks like in practice

A buyer asks: "We're a 150-person SaaS company with a PLG motion, EU customers, and a tiny RevOps team. Can you handle this without a 6-month implementation?"

That's not FAQ territory. That's scenario evaluation. A chatbot deflects it. An agent engages it.

A good agent:

- Asks clarifying questions to understand constraints

- Reasons across multiple factors (timeline, team size, compliance needs)

- Recommends the best-fit path or package

- Hands off to sales with context intact

This is an architectural upgrade, not a UI upgrade.

The Core Difference: Chatbots Route, AI Agents Reason

Chatbots route conversations.

Traditional chatbots optimize for control and predictability. They reduce variance by design: fixed flows, limited intent buckets, deterministic outcomes. This tradeoff works for routing. It fails for evaluation.

Where routing breaks down:

- Pricing tradeoffs ("What happens if we need to scale mid-contract?")

- Objections ("We tried something similar and it failed—why is this different?")

- Competitive comparisons ("How do you compare to [Competitor X] on security?")

- Timeline pressure ("We need to be live in 6 weeks—is that realistic?")

- Internal stakeholder concerns ("Our IT team will ask about SOC 2—what should I tell them?")

These are exactly the moments where buyer intent is highest. And exactly where chatbots deflect to forms or calendars.
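To make the routing tradeoff concrete, here is a minimal Python sketch of a keyword-based router. The intent buckets, keywords, and fallback name are illustrative assumptions, not any vendor's actual logic; real chatbot platforms use richer flow builders, but the shape of the limitation is similar.

```python
# Hypothetical intent buckets for a scripted chatbot (illustrative only).
INTENT_KEYWORDS = {
    "pricing": ["pricing", "cost", "plan"],
    "support": ["bug", "error", "help"],
    "demo": ["demo", "meeting"],
}

# Hypothetical fallback: anything the script does not recognize goes to a form.
FALLBACK = "talk_to_sales_form"


def matched_intents(message: str) -> list[str]:
    """Return every bucket whose keywords appear in the message.

    Uses crude substring matching, which is enough for this illustration.
    """
    text = message.lower()
    return [
        intent
        for intent, keywords in INTENT_KEYWORDS.items()
        if any(keyword in text for keyword in keywords)
    ]


def route(message: str) -> str:
    """Pick exactly one destination: the first matching bucket, or the fallback."""
    matches = matched_intents(message)
    return matches[0] if matches else FALLBACK


question = "What happens to pricing if we need help scaling mid-contract?"
print(route(question))            # one bucket wins
print(matched_intents(question))  # everything the question actually touched
```

The router is deterministic and easy to audit, which is the appeal. It also collapses a multi-part question into a single bucket, and a timeline question with no matching keyword falls straight through to the form.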

Agents reason through evaluation.

Instead of deflecting complex questions, agents move the buyer closer to a decision:

- Turn messy, multi-part questions into crisp clarifying questions

- Map stated needs to specific capabilities

- Make tradeoffs explicit ("You mentioned timeline is critical—here's what that means for implementation scope")

- Recommend next steps with context intact

This matches what buyers now expect: answers, not navigation. They don't want to dig through pages or fill out forms to learn the basics.

Chatbot capability plateaus early. Agent capability extends across complexity ranges.

In operating model terms: chatbots belong to the Assisted Marketing era — the tool moves the human faster, but the human is still the bottleneck at every meaningful step. Agents belong to the Agentic Marketing era — the agent executes the evaluation conversation autonomously, within guardrails the human defines, and escalates when judgment is required. That's not a feature difference. It's a different model for how marketing work gets done.

How AI Agents Work: The Architecture That Enables Reasoning

Agent reasoning is grounded in three layers:

- Knowledge base (structured, indexed): Product docs, pricing logic, competitive positioning—indexed so the agent can retrieve accurate information, not hallucinate

- Product schema (capabilities and integrations as connected facts): Not just "we integrate with Salesforce" but "we integrate with Salesforce, here's what syncs, here's what doesn't, here's how setup works"

- Visitor scenario (role, stack, constraints, urgency): Context captured during the conversation that shapes recommendations

This grounding is what separates useful agents from chatbots with better language models.
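One way to picture the three layers is as plain data structures that get assembled into a grounding context before the agent answers. The sketch below is a simplified assumption about how that could look; the class names, fields, and the `build_grounding` helper are hypothetical, and the sample values used with it should be treated as placeholders rather than real product facts.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeEntry:
    """Layer 1: an indexed piece of product or pricing documentation."""
    topic: str
    text: str


@dataclass
class Integration:
    """Layer 2: an integration stored as connected facts, not a single claim."""
    name: str
    syncs: list[str]
    does_not_sync: list[str]
    setup_notes: str


@dataclass
class VisitorScenario:
    """Layer 3: context captured during the conversation."""
    role: str
    stack: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    urgency: str = "unknown"


def build_grounding(question, knowledge, integrations, scenario):
    """Collect only the facts relevant to this question and this visitor."""
    q = question.lower()
    docs = [entry.text for entry in knowledge if entry.topic.lower() in q]
    stack = {tool.lower() for tool in scenario.stack}
    facts = [
        item for item in integrations
        if item.name.lower() in stack or item.name.lower() in q
    ]
    return {
        "docs": docs,
        "integrations": facts,
        "scenario": scenario,
        # If nothing was retrieved, the agent has no basis for an answer.
        "grounded": bool(docs or facts),
    }
```

The `grounded` flag is the useful part: when retrieval comes back empty, the agent has a signal to clarify or escalate instead of improvising.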

Here's the part your buyers and your security team will care about: agents increase blast radius if you don't constrain them.

These are specific risks you should assume will happen in production:

- Prompt injection: Users attempting to override instructions or extract information

- Sensitive information disclosure: Agent reveals pricing, roadmap, or customer details it shouldn't

- Excessive agency: Agent takes actions (CRM writes, meeting bookings) without appropriate checks

So your "agent vs. chatbot" decision isn't only about capability. It's about capability + control.

Why Governance Isn’t Optional for AI Agents

This is what human oversight in Agentic Marketing actually looks like in production. Not a promise in a pitch deck. An architecture — with defined guardrails, escalation rules, compliance enforcement, and human review built into how the agent operates. Every agentic deployment should be able to answer for each of the following:

Agents can do more than chatbots, which means they can break more, too. Without guardrails, you're exposed to prompt injection, sensitive data disclosure, and uncontrolled CRM writes. Vendors who treat governance as an afterthought will cost you pipeline, trust, or both.

Governance Checklist: Questions to Ask Before You Deploy AI Agents

If you're evaluating agents, require evidence of:

What “Good” Looks Like in AI Agents: Docket as an Example

Docket's guardrails are architectural, not "please behave" prompts.

Their custom cognitive architecture splits the system into:

- Responder: The buyer-facing conversation layer

- Thinker: The deliberate reasoning and execution layer

The agent chatting with your buyer isn't the same system touching your CRM. Actions flow through a shared tool registry, which means the agent can only perform pre-approved operations (scoped actions, least privilege). You can log the plan and tool calls for audit and debugging.

That separation is the control point: risky requests can be clarified, refused, or escalated before anything gets written to systems of record.
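The general pattern of a tool registry with scoped actions and an audit log can be sketched in a few lines of Python. This is an illustration of the idea only, not Docket's implementation; the `ToolRegistry` class, the `book_meeting` tool, and the log format are all hypothetical.

```python
from typing import Any, Callable


class ToolRegistry:
    """Holds the pre-approved actions and records every attempted call."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., Any]] = {}
        self.audit_log: list[dict[str, Any]] = []

    def register(self, name: str, func: Callable[..., Any]) -> None:
        """Approve an action. Anything not registered stays unavailable."""
        self._tools[name] = func

    def call(self, name: str, **kwargs: Any) -> Any:
        """Run an approved action, logging the attempt either way."""
        allowed = name in self._tools
        self.audit_log.append({"tool": name, "args": kwargs, "allowed": allowed})
        if not allowed:
            # Least privilege: unknown actions are refused, never improvised.
            raise PermissionError(f"Tool '{name}' is not registered")
        return self._tools[name](**kwargs)


def book_meeting(email: str, slot: str) -> str:
    """Hypothetical approved action; a real one would call a calendar API."""
    return f"booked {slot} for {email}"


registry = ToolRegistry()
registry.register("book_meeting", book_meeting)
print(registry.call("book_meeting", email="buyer@example.com", slot="Tue 10:00"))
```

In a split design like the one described above, only the execution layer would hold a reference to the registry. The conversation layer can request an action, but it has no direct path to systems of record, and refused attempts still show up in the log.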

How Chatbots vs AI Agents Play Out in Inbound Qualification

Same inbound lead. Two different outcomes.

A VP of Revenue at a 300-person SaaS company lands on your pricing page at 9pm. They want to know if your product handles multi-region billing, integrates with their existing Salesforce setup, and whether your enterprise tier includes SSO.

With a chatbot:

The bot detects "pricing page" intent and serves a pre-built response about plans. It can't answer the Salesforce question — that's not in the script. It can't address SSO — that's not mapped to this flow. It offers to connect them with a rep. The visitor closes the tab. Your CRM logs a "chatbot interaction." No pipeline created.

With an AI Marketing Agent:

The agent understands the full scenario — multi-region billing, Salesforce integration, SSO on enterprise. It pulls from your product knowledge base, your integration docs, and your pricing structure. It answers all three questions accurately, in one conversation. It qualifies the lead, confirms fit, and offers to book time with the right AE — with full context already passed to CRM.

Same visitor. Same intent. Entirely different outcome. That's the operating model gap between Assisted Marketing and Agentic Marketing.
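"Full context passed to CRM" is easier to evaluate when you can see what a structured handoff might contain. The sketch below is one possible shape, with hypothetical field names; an actual CRM integration would map these onto its own objects and fields.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Handoff:
    """Hypothetical handoff record the agent writes when it books the AE."""
    visitor_role: str
    company_size: int
    questions_asked: list[str]
    open_questions: list[str] = field(default_factory=list)
    recommended_next_step: str = "book_ae_meeting"


def to_crm_note(handoff: Handoff) -> str:
    """Serialize the handoff so the rep sees the conversation, not just a lead."""
    return json.dumps(asdict(handoff), indent=2)


note = to_crm_note(
    Handoff(
        visitor_role="VP of Revenue",
        company_size=300,
        questions_asked=[
            "multi-region billing",
            "Salesforce integration",
            "SSO on enterprise tier",
        ],
    )
)
print(note)
```

The point of the structure is that the rep starts from what the buyer already asked, rather than restarting discovery.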

Chatbots vs AI Agents: Side by Side Comparison

The gap isn't features. It's the operating model.

How to Decide Between a Chatbot and an AI Agent

In operating model terms: chatbots are the right choice for Assisted Marketing workflows — high volume, low complexity, humans still driving every meaningful step. Agents are the right choice when you're ready for Agentic Marketing — where the agent owns the evaluation conversation, and humans govern rather than operate.

Pick a chatbot if:

- Your primary job is routing ("sales vs. support," "book a meeting")

- Questions are mostly FAQ-level

- Wrong answers are low-risk

- You need maximum predictability

- You don't have bandwidth for knowledge governance

Pick an agent if:

- Buyers ask scenario questions about stack, security, compliance, deployment, or pricing logic

- You need real qualification, not just email capture

- You care about CRM integrity (structured handoffs, clean routing)

- You want your website to behave like an answer engine

- You're ready to implement controls aligned with the OWASP Top 10 for LLM Applications (prompt injection, sensitive information disclosure, excessive agency)

Implementation Traps: Mistakes with Chatbots and AI Agents That Cost You Pipeline

If you deploy a chatbot:

- Avoid the bot wall: Don't gate with email before delivering value. Keep required fields minimal. Provide escape hatches (talk to human, send summary, view docs).

- Don't over-branch: Too many decision trees create dead ends. Buyers get stuck or abandon.

- Test with real buyer questions: FAQ coverage isn't enough. Use actual questions from sales calls and see where the bot breaks.

If you deploy an agent:

- Treat it like a production system: Grounding, permissions, and logging are mandatory from day one, not "phase two."

- Start with constrained scope: One product line, one ICP segment. Expand after you've validated accuracy.

- Build a feedback loop: Flag bad answers, review weekly, retrain. Agents improve with attention; they degrade with neglect.

- Set escalation rules: Uncertainty should trigger clarifying questions or human routing, not confident-sounding hallucinations.
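An escalation rule can be as simple as a small decision function that runs before every reply. The sketch below assumes the agent exposes a confidence score and a flag for whether the answer was grounded in retrieved content; both inputs, the threshold of 0.8, and the limit of two clarifying questions are illustrative assumptions to tune for your own deployment.

```python
from enum import Enum


class Action(Enum):
    ANSWER = "answer"
    CLARIFY = "clarify"
    ESCALATE = "escalate"


def next_action(
    confidence: float,
    grounded: bool,
    clarifications_asked: int,
    answer_threshold: float = 0.8,   # assumed value, not a recommendation
    max_clarifications: int = 2,     # assumed value, not a recommendation
) -> Action:
    """Decide whether to answer, ask a clarifying question, or hand off."""
    # Only answer when the reply is both grounded and high-confidence.
    if grounded and confidence >= answer_threshold:
        return Action.ANSWER
    # Otherwise try to narrow the question, up to a fixed number of times.
    if clarifications_asked < max_clarifications:
        return Action.CLARIFY
    # Still uncertain after clarifying: route to a human with context.
    return Action.ESCALATE


print(next_action(confidence=0.9, grounded=True, clarifications_asked=0))
print(next_action(confidence=0.9, grounded=False, clarifications_asked=0))
print(next_action(confidence=0.4, grounded=True, clarifications_asked=2))
```

Note that a confident but ungrounded answer never goes out: without retrieved content behind it, high confidence is exactly the hallucination case the rule exists to catch.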

Key Questions to Ask Chatbot and AI Agent Vendors

Use these to separate genuinely agentic platforms from relabeled chatbots:

- Where does your knowledge come from?

A true agent pulls from a live, unified knowledge base: product docs, Gong calls, Slack threads, CRM data. If the answer is "we have a content editor where you build scripts," it's a chatbot.

- Can it handle a multi-part technical question it hasn't seen before?

Ask them to demo it live with a question not on the pricing page. Chatbots deflect. Agents reason and answer.

- What happens when the agent doesn't know something?

The answer should describe a governance model: escalation rules, human handoff with full context, defined topic boundaries. If the answer is "it tries its best," that's a red flag.

- Who sets the guardrails, and how are they enforced?

Agentic Marketing requires human oversight by design. The vendor should be able to explain exactly how humans define objectives, content boundaries, and escalation thresholds, and how those are enforced at runtime.

- How long does deployment take?

Months usually means avatar production or heavy script-building (Assisted era). Days or weeks usually means a knowledge-grounded agent with white-glove onboarding (Agentic era).

- What does your governance and compliance architecture look like?

For enterprise buyers: SOC 2, GDPR, ISO 27001, data residency, and audit logging should all be answerable without hesitation.

Bottom Line: When a Chatbot Is Enough and When You Need an AI Agent

The real question isn't chatbot or agent. It's which operating model your website is running.

Assisted Marketing loses the evaluation moment — the buyer arrives with a real question, hits a routing widget, and leaves. Agentic Marketing owns it — the agent executes the conversation the buyer was ready to have, autonomously, accurately, and on their terms.

The tool you choose is a downstream decision. The operating model is the upstream one.