The 2026 AI Procurement Checklist: Vetting Your AI Agent for Security, Privacy, and Trust

It's Tuesday afternoon. The contract has been sitting with your legal team for three weeks. Not because the product failed evaluation. Because IT sent back a security questionnaire the vendor can't fully answer, and now your deal is stalled at the finish line.

This is how most AI agent deals die in 2026. Not on features. On procurement.

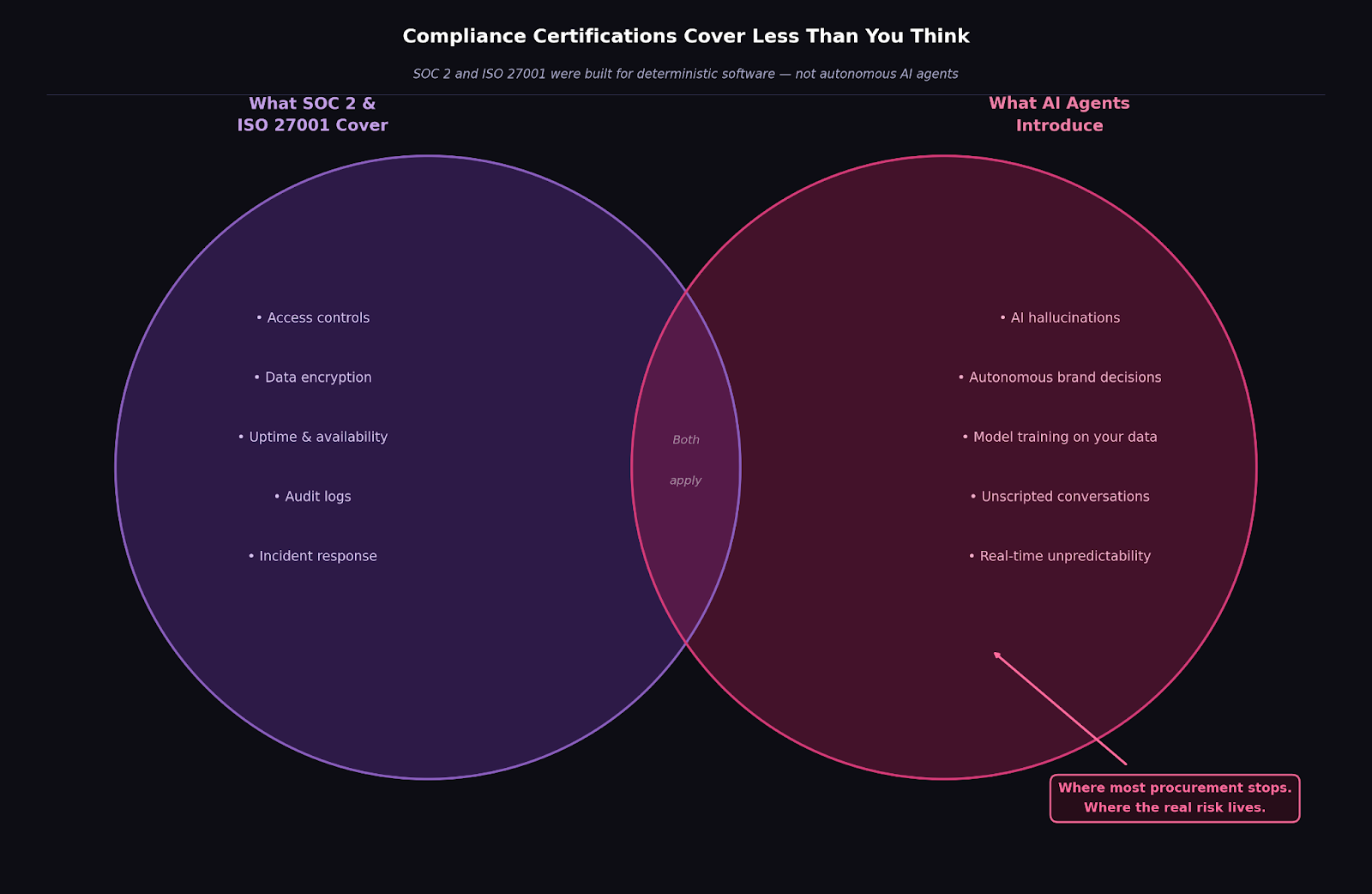

The problem isn't your legal team being difficult. The problem is that your evaluation process was built for deterministic software, not for AI agents that generate novel outputs, hold unscripted conversations with your prospects, and make autonomous decisions about your brand in real time. The standard vendor security form wasn't designed for any of that.

"Teams check the SOC 2 box and think they're done," says Arjun Pillai, CEO of Docket. "They never ask the hard follow-up questions about the architecture of the AI."

The data backs him up. 72% of S&P 500 companies now flag AI as a material risk in their public disclosures, up from just 12% in 2023 (The Conference Board, October 2025). And 97% of organizations that suffered an AI-related breach admitted they lacked adequate AI access controls (CygenIQ, February 2026).

Yet 74% of businesses say they are concerned about AI privacy risks, while still buying AI tools without a procurement process designed for them (Yahoo Finance, March 2025).

This checklist is designed to fix that. It goes beyond compliance certificates to probe the architecture, the data policies, and the guardrails that separate trustworthy AI vendors from risky ones.

Share this with your IT lead or legal team before the security review call. It gives them specific, architecture-level questions to ask, not just a certificate request. A vendor who hesitates on any question here is telling you something. A vendor who answers every one without hesitation has built the right way.

These certifications are the minimum. With 80% of mid-market SaaS RFPs now demanding SOC 2 Type II, vendors without it are disqualified before the conversation starts (Jeeva AI, July 2025).

A public-facing trust center is a strong signal. A lack of one should end the evaluation.

The EU AI Act is in force. Any vendor serving European customers must be able to classify their AI system under its risk-based framework and demonstrate compliance with transparency and human oversight requirements (CloudEagle.ai, December 2025).

This is the most important section. The wrong answers here don't just create compliance exposure. They can leak your competitive positioning to every other customer on a vendor's platform.

The only acceptable answer is no. If a vendor feeds your conversation transcripts, customer intelligence, or competitive positioning into shared models, your proprietary knowledge effectively becomes a training contribution to their entire customer base. We never train on customer data. Every customer's knowledge stays exactly where it belongs: with them.

This is the architectural question most security reviews miss entirely. Isolated means your data cannot be accessed by, cross-referenced with, or co-mingled with any other organization on the platform. Docket's Sales Knowledge Lake™ is unique to each customer, connects to your docs, your Slack, and your CRM, and is ring-fenced from every other deployment.

Under GDPR's Right to Erasure, you can request complete deletion of your data at any time. The vendor must confirm they can execute this permanently — not just flag the data as inactive. If they can't give you a clear answer with a timeline, that's your answer.

Expect AES-256 encryption for stored data and full encryption for anything moving between systems. Anything less is below industry standard for a platform handling live prospect conversations.

Most security questionnaires stop at certifications. This section is where the real evaluation happens.

Traditional security frameworks were never designed to evaluate whether an AI can fabricate a product feature, go off-brand, or confidently mislead a prospect at 2 AM. That's a different risk category entirely — and it has no SOC 2 checkbox.

Any serious answer here involves grounding: the AI should only respond from a verified, approved knowledge base — not from general training data scraped from the internet. When the knowledge base doesn't have the answer, the AI should say so and hand off to a human rather than improvise. That's an architectural decision, not a settings toggle.

Docket's AI Marketing Agent is built exactly this way. If a prospect asks something outside our approved knowledge, it says, "I don't know, but let me connect you with someone who does." Not a guess. A handoff. The system is designed to fail gracefully, because failing gracefully beats failing confidently.
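For reviewers who want to picture what "grounded, with a handoff" means in practice, here is a minimal Python sketch. It is a toy illustration of the pattern only, not Docket's or any vendor's implementation. The knowledge base entries, the keyword lookup, and the names `APPROVED_KB`, `Answer`, and `answer` are all hypothetical. A production system would use retrieval over approved documents rather than keyword matching.

```python
from dataclasses import dataclass
from typing import Optional

HANDOFF_MESSAGE = "I don't know, but let me connect you with someone who does."


@dataclass
class Answer:
    text: str
    source: Optional[str]  # ID of the approved entry the answer came from
    handed_off: bool       # True when the question was routed to a human


# Hypothetical approved knowledge base: topic keyword -> (source ID, vetted answer).
APPROVED_KB = {
    "sso": ("security-faq#sso", "SSO is supported through your identity provider."),
    "encryption": ("security-faq#encryption", "Stored data is encrypted with AES-256."),
}


def answer(question: str) -> Answer:
    """Answer only from approved entries; otherwise hand off instead of guessing."""
    words = {w.strip("?.,!").lower() for w in question.split()}
    for topic, (source, text) in APPROVED_KB.items():
        if topic in words:
            return Answer(text=text, source=source, handed_off=False)
    # No approved entry matched: do not improvise, escalate to a human.
    return Answer(text=HANDOFF_MESSAGE, source=None, handed_off=True)
```

The point to probe with a vendor is the last branch: there is no code path that produces an answer without an approved source attached to it.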

An AI trained on last quarter's product documentation is quietly spreading misinformation today. Ask how often the system syncs with your source materials, what triggers an update, and how stale knowledge gets flagged. Docket runs nightly recrawls of each customer's website and syncs with over 100 data sources to keep Aura accurate and current.
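A staleness check is simple to reason about, which makes it a fair thing to ask a vendor to demonstrate. The sketch below assumes each source records a last-synced timestamp and flags anything older than a chosen threshold. The function name `stale_sources`, the source names, and the 24-hour threshold in the usage note are illustrative assumptions, not a description of any vendor's system.

```python
from datetime import datetime, timedelta


def stale_sources(last_synced, max_age, now):
    """Return the names of sources whose last sync is older than max_age.

    last_synced: dict mapping source name -> datetime of last successful sync
    max_age:     timedelta after which a source counts as stale
    now:         current time, passed in so the check is easy to test
    """
    return sorted(
        name for name, synced_at in last_synced.items()
        if now - synced_at > max_age
    )
```

With a 24-hour threshold, a source last synced 30 hours ago would be flagged while one synced 6 hours ago would not. Ask the vendor what their equivalent threshold is and who gets notified when a source crosses it.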

This should be non-negotiable. Full conversation logs for every interaction — not summaries, not aggregates. If something goes wrong, you need the transcript. If something goes right, you need to know why. Docket provides complete audit trails for every conversation, by default.

This section is less dramatic than Section 3 but equally important. It separates vendors who have thought seriously about enterprise deployment from those who bolt on security features as customers ask for them.

The platform must support role-based access control (RBAC) and the principle of least privilege, so not everyone in your company can see every conversation or edit every knowledge source. Ask whether SSO (Single Sign-On) is supported, so your organization can log in through your existing identity provider (Okta, Google Workspace, or similar) rather than managing a separate set of credentials, and whether it is enforced for enterprise accounts or left optional. This matters more once you're live and onboarding teams across departments.
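To make "least privilege" concrete for the review call, here is a minimal Python sketch of a deny-by-default role check. The role names, the permission names, and the `is_allowed` helper are hypothetical and chosen only to mirror the examples above (viewing conversations, editing knowledge sources). A real platform will have its own role model.

```python
from enum import Enum


class Permission(Enum):
    VIEW_CONVERSATIONS = "view_conversations"
    EDIT_KNOWLEDGE = "edit_knowledge"
    MANAGE_USERS = "manage_users"


# Hypothetical role model: each role gets only the permissions it needs.
ROLE_PERMISSIONS = {
    "viewer": frozenset({Permission.VIEW_CONVERSATIONS}),
    "editor": frozenset({Permission.VIEW_CONVERSATIONS, Permission.EDIT_KNOWLEDGE}),
    "admin": frozenset(Permission),
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: unknown roles receive an empty permission set."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())
```

The detail worth asking about is the default: a role the system does not recognize should get nothing, not everything.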

Your data security is only as strong as the least secure system it passes through. Any vendor worth evaluating should hand you a current sub-processor list and explain how they review those vendors. A shrug or a delay here is a red flag.

Annual is the floor. Quarterly — or after major product updates — is what mature security programs look like. Ask whether they share results or attestations with customers. Not the full report, but evidence it happened and what was found.

An AI agent having live conversations with your prospects is not a background tool. It's front-line infrastructure. Ask for their uptime SLA, their mean time to resolution (MTTR) for incidents, and how they notify customers when something breaks. A vendor who handles this question vaguely hasn't thought seriously about production.

There should be a documented process: how vulnerabilities get identified, how they get prioritized, how patches get deployed, and how customers get notified. If this only exists informally, that's worth knowing before you sign.

Getting procurement right does more than reduce risk. It clears the path for real outcomes.

When Demandbase evaluated AI marketing agents, security and compliance were not obstacles. They were prerequisites.

"We needed an AI agent that could represent our brand accurately and handle sensitive conversations with enterprise prospects," says Sara Ting, VP of Marketing at Demandbase. "Docket's architecture, the isolated knowledge base, the hallucination guardrails, the full audit trails, gave us the confidence to deploy. The results followed: a 15% increase in qualified pipeline within the first quarter."

Other Docket customers have seen similar results. A customer reported an 11% boost in website engagement after deploying Aura. Another saw a 6% decrease in customer acquisition cost.

These outcomes are only possible when the security foundation is solid. One hallucination incident or data exposure does not just damage your brand. It destroys the entire ROI model.

The vendors who can answer every question on this checklist without hesitation have built the architecture correctly. The ones who can't are telling you exactly where your risk is.

If a vendor hesitates, asks for time to check internally, or gives a vague answer on data isolation or model training, you have your answer.

Ready to put these questions to Docket? Every answer is already on our trust center, and our team is available to walk your IT and legal stakeholders through the architecture before your next review call.