5 Ways to Improve Pipeline Quality for B2B Teams in 2026

Something shifted in B2B go-to-market over the last 18 months. Teams got faster, dramatically so. Forrester found that in less than two years, 89% of B2B buyers adopted generative AI, naming it one of the top sources of self-guided information in every phase of their buying process. AI tools shortened outreach cycles, automated lead scoring, and filled calendars.

Win rates, however, have not followed. According to HubSpot's 2024 Sales Trends Report, the average B2B win rate sits at 20 to 21%, meaning roughly four in five opportunities do not close. Sales cycles have not shortened in proportion to the effort invested, and sales engineering teams still spend a disproportionate share of their time on deals that were never a real fit.

The pattern is consistent enough to name: revenue teams have automated volume without governing quality. The tools got better at doing the work. They did not get better at deciding which work was worth doing.

This post examines why pipeline quality has become the defining go-to-market challenge of 2026 and outlines five changes B2B revenue teams can make right now, without adding headcount, rebuilding the stack, or killing MQL programs overnight.

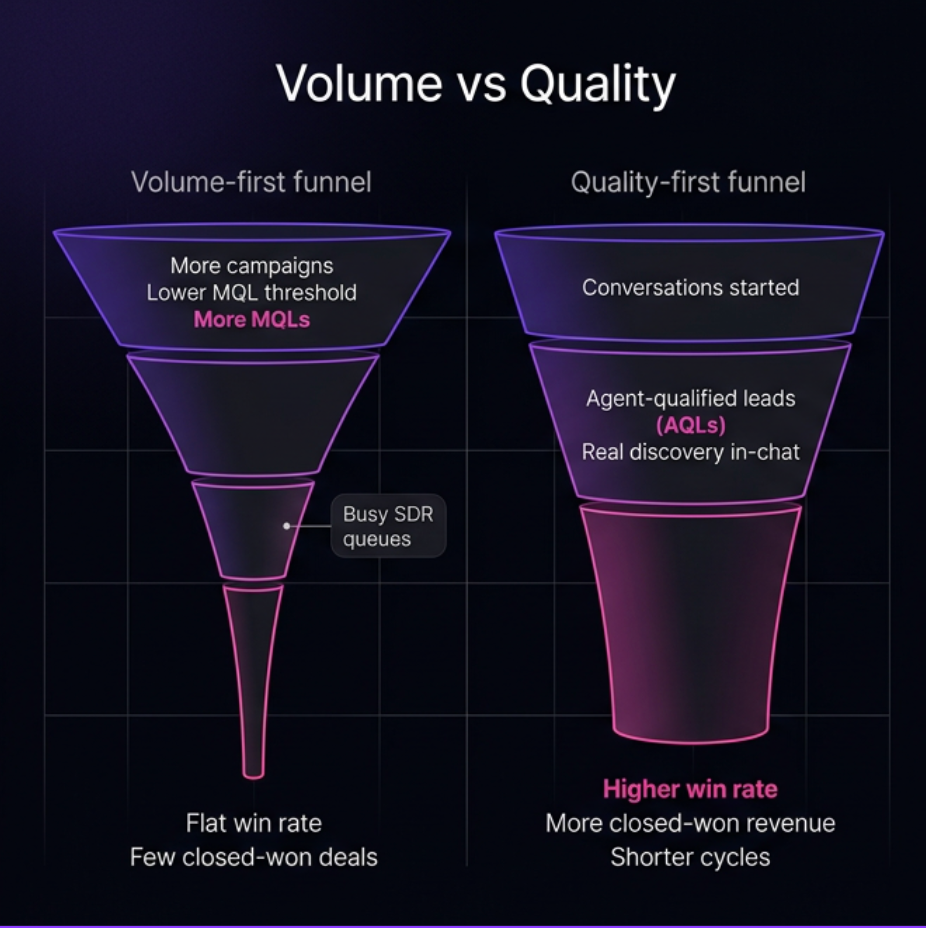

Most teams read flat win rates as a volume problem. If only there were more leads at the top of the funnel, more of them would make it through. So the instinct is to push harder on campaigns, lower the MQL threshold, or buy more intent data.

It is an understandable diagnosis. It is also incomplete.

The problem is not that too few leads are entering the funnel. The problem is that many of the leads entering the funnel were never qualified to begin with, and the AI tools most teams deployed made that worse, not better.

Here is what most teams still get wrong about AI productivity: they conflate speed with quality. Copilots and AI-assisted tools make humans faster at the existing motion, with better drafts, faster lead scoring, and smarter suggestions. But every one of those tools waits for a human to open a tab and act. When a high-intent buyer lands on the website at 11pm and asks a real evaluation question, those AI tools are asleep. The buyer is not.

The compounding problem runs deeper. Buyers in 2026 arrive at the website having already used AI to research vendors, compare options, and build a shortlist. According to 6sense's 2025 Buyer Experience Report, 94% of buyers now use AI during their buying process. They have already formed a point of view before they reach the site. Pipeline quality starts before the form submit, before the MQL is created, and before any human on the team is involved.

By the time volume hits the CRM, the quality problem is already baked in. More MQLs from a broken qualification model produce better-looking dashboards and identical win rates. The pipeline looks productive from the outside. The moment ad spend slows or outbound volume drops, it falls apart. This is the volume trap.

Before getting to the five fixes, it is worth being precise about what pipeline quality means in 2026, because most teams are measuring a proxy for it, not the thing itself.

Pipeline quality is a system output. It is deal fit multiplied by intent clarity multiplied by context richness at handoff. Not a subjective rep judgment. Not a feeling about the quarter. A measurable output of how the engagement motion is designed. When those three conditions are high, win rates improve and cycles shorten. When they are low, reps work hard on deals that were never going to close.
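To make the multiplication concrete, here is a minimal sketch that scores an opportunity as the product of the three factors. The 0-to-1 scale, the function name `pipeline_quality`, and the sample values are assumptions for illustration only, not a published scoring formula.

```python
def pipeline_quality(deal_fit: float, intent_clarity: float, context_richness: float) -> float:
    """Multiply three factors, each assumed to be scored on a 0-to-1 scale."""
    factors = {
        "deal_fit": deal_fit,
        "intent_clarity": intent_clarity,
        "context_richness": context_richness,
    }
    for name, value in factors.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return deal_fit * intent_clarity * context_richness


# Hypothetical example: same fit and intent, different handoff context.
strong = pipeline_quality(0.9, 0.8, 0.9)
weak_handoff = pipeline_quality(0.9, 0.8, 0.2)
print(f"strong handoff: {strong:.3f}, thin handoff: {weak_handoff:.3f}")
```

Because the factors multiply rather than add, one weak factor drags the whole score down: a well-fit, high-intent buyer handed off with thin context still scores poorly.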

Most teams still treat pipeline quality as a judgment call that lives inside the rep. If the rep had better discovery skills, better follow-through, or more persistence, the pipeline would be better. The problem is upstream, at the point of engagement, before the rep ever touches the lead.

The metric that better reflects quality is not leads generated. It is qualified meetings as a percentage of conversations started. That shift forces the entire system to optimize for the outcome that matters: not contact capture, but buyer readiness.
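The metric itself is simple arithmetic. The sketch below computes it under assumed inputs; the function name and the sample counts are hypothetical, not figures from any deployment.

```python
def qualified_meeting_rate(qualified_meetings: int, conversations_started: int) -> float:
    """Return qualified meetings as a percentage of conversations started."""
    if conversations_started <= 0:
        # No conversations means there is nothing to measure yet.
        return 0.0
    return 100.0 * qualified_meetings / conversations_started


# Hypothetical month: 120 conversations started, 18 qualified meetings booked.
rate = qualified_meeting_rate(18, 120)
print(f"Qualified meeting rate: {rate:.1f}%")
```

Note that the denominator is conversations started, not leads captured, so adding unqualified volume at the top lowers the number instead of inflating it.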

The incentive structure makes this harder than it sounds. Marketing is measured on volume. Sales is measured on revenue. Those two things are not the same metric, and the gap between them is where pipeline quality leaks. Changing the metric only works if both functions are accountable to the same outcome.

A lead also needs a new definition. It is not just a contact. It is a contact plus qualification status, intent signals, and the exact questions the buyer asked. Anything less is a guess dressed up as a handoff.

An MQL gives a rep a name, an email address, a page view, and maybe a lead score.

An AQL gives a rep a context card: qualification criteria answered in conversation, intent signals logged, objections surfaced, and the exact questions the buyer asked, ready before the first call.

The difference is not incremental. One tells a rep a lead exists. The other tells a rep why the buyer showed up, what they need to know, and where the conversation left off.

Reps starting from an AQL do not start cold. First calls are categorically different, not because the rep is more skilled, but because the qualification work happened in the conversation before the handoff, not on the call itself.
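The contrast between the two records can be sketched as data structures. The class and field names below are illustrative assumptions based on the descriptions above, not an actual CRM or Docket schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MQL:
    """What a rep typically receives from a form fill."""
    name: str
    email: str
    page_viewed: str
    lead_score: Optional[int] = None


@dataclass
class AQL:
    """A contact plus the context gathered in conversation (hypothetical fields)."""
    name: str
    email: str
    qualification: dict[str, str] = field(default_factory=dict)  # criterion -> buyer's answer
    intent_signals: list[str] = field(default_factory=list)
    objections: list[str] = field(default_factory=list)
    buyer_questions: list[str] = field(default_factory=list)

    def is_handoff_ready(self) -> bool:
        # Assumed rule: a handoff needs answered criteria and the buyer's own questions.
        return bool(self.qualification) and bool(self.buyer_questions)


card = AQL(
    name="Sample Buyer",
    email="buyer@example.com",
    qualification={"timeline": "this quarter", "use_case": "inbound qualification"},
    intent_signals=["visited pricing page"],
    objections=["concerned about CRM sync"],
    buyer_questions=["Does it integrate with our CRM?"],
)
print(card.is_handoff_ready())
```

An `AQL` built with only a name and an email fails the readiness check, which is the point: a bare contact is an MQL with a different label.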

Forms collect contact information. Agents collect qualification signals: budget range, timeline, use case, and technical requirements, in real time and in the buyer's own words.

By the time the lead reaches the CRM, the qualification work is done. No SDR follow-up is required to determine if the deal is real. Across Docket deployments, this produces a 36% conversation start rate versus 13% on legacy form flows.

The trap most teams fall into is simple: qualification logic that lives only in an SDR's head does not scale. It dies when someone leaves, takes a sick day, or gets a full calendar. When qualification is a system property rather than a rep skill, it becomes consistent, auditable, and less dependent on who is online.

Buyers do not just want information during evaluation. They want answers to hard, scenario-specific questions: security posture, integration depth, pricing edge cases, and competitive differentiators.

Legacy scripted chatbots deflect. The standard response is some version of “our team will reach out.” That is not an answer. An AI Marketing Agent reasons through evaluation questions from approved knowledge and keeps the buyer in the conversation.

Deflection is a pipeline quality killer. Every unanswered question is a reason to slow down or go somewhere else. The buyer does not fill out a form. They simply leave, carrying the evaluation with them.

Docket customers see a 20 to 40% lift in qualified meetings from the same traffic volume, not from more campaigns, but from fewer conversations that end in deflection.

When every vendor's AI gives roughly the same LLM-generated answer to evaluation questions, buyers cannot differentiate between vendors. Pipeline starts losing before the conversation ends. This is the AI flattening problem.

Docket's Sales Knowledge Lake™ grounds every agent answer in approved product knowledge: pricing, security documentation, technical specifications, and competitive claims. There is no open-ended inference. If the answer is not in the approved knowledge, the agent says so and routes the buyer to the right person on the team.
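The "answer from approved knowledge or say so and route" behavior can be illustrated with a deliberately simplified sketch. The keyword lookup below is a stand-in chosen for brevity; it is an assumption for illustration and does not represent how the Sales Knowledge Lake works internally. The topics and answer text are placeholders.

```python
from dataclasses import dataclass


@dataclass
class AgentReply:
    text: str
    grounded: bool        # True only when the text came from approved knowledge
    route_to_human: bool  # True when the buyer should be handed to a person


# Placeholder knowledge base: topic keyword -> approved answer text.
APPROVED_KNOWLEDGE = {
    "sso": "Approved answer about single sign-on (placeholder).",
    "pricing": "Approved answer about pricing tiers (placeholder).",
}


def answer(question: str, knowledge: dict[str, str]) -> AgentReply:
    """Reply only from approved entries; otherwise admit the gap and route."""
    normalized = question.lower()
    for topic, approved_text in knowledge.items():
        if topic in normalized:
            return AgentReply(approved_text, grounded=True, route_to_human=False)
    # No approved source: do not improvise an answer.
    return AgentReply(
        "I don't have an approved answer for that. I'll connect you with someone who does.",
        grounded=False,
        route_to_human=True,
    )


print(answer("Do you support SSO?", APPROVED_KNOWLEDGE))
print(answer("What is on your roadmap?", APPROVED_KNOWLEDGE))
```

The design choice worth noting is the explicit fallback branch: the agent never generates text for a topic it has no approved source for.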

This is not just an accuracy issue. It is a pipeline quality issue. A misstatement on pricing or security in an AI-generated answer plants doubt that follows the deal through the entire cycle. The rep often never knows why it stalled.

At Demandbase, 93% of seller queries were automated through this governed knowledge foundation, grounded in approved knowledge from day one.

For a deeper look at how this works in practice, see Docket's piece on custom cognitive architecture.

Most handoffs are a contact record and a CRM task. The rep has no idea what the buyer actually asked, what stage of evaluation they are in, or what objections surfaced during the website visit. The first call starts from zero.

Docket's AI Marketing Agent routes based on territory, product line, and account ownership, and syncs the full conversation context to the CRM automatically. The rep arrives informed. Docket customers see approximately 12% higher win rates from cleaner qualification and better deal fit at entry.
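As a rough sketch of routing on those three inputs, the example below checks account ownership first, then falls back to a territory and product line lookup. The precedence order, the table contents, and every name here are assumptions for illustration, not Docket's routing logic.

```python
# Hypothetical routing tables.
ACCOUNT_OWNERS = {"acme.example": "rep_dana"}
TERRITORY_PRODUCT_REPS = {
    ("EMEA", "analytics"): "rep_lee",
    ("NA", "analytics"): "rep_sam",
}
DEFAULT_QUEUE = "inbound_queue"


def route(account_domain: str, territory: str, product_line: str) -> str:
    """Pick an owner: existing account owner first, then territory and product line."""
    owner = ACCOUNT_OWNERS.get(account_domain)
    if owner is not None:
        return owner
    # Unowned accounts fall through to the territory/product table, then a shared queue.
    return TERRITORY_PRODUCT_REPS.get((territory, product_line), DEFAULT_QUEUE)


print(route("acme.example", "EMEA", "analytics"))
print(route("newco.example", "EMEA", "analytics"))
print(route("newco.example", "APAC", "analytics"))
```

Giving account ownership precedence keeps a named account with its existing rep even when the visitor sits in another territory.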

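A good handoff context card includes the qualification criteria answered in conversation, the intent signals logged, the objections surfaced, and the exact questions the buyer asked.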

Most teams treat inconsistent qualification as a people problem and try to solve it with training, better playbooks, more coaching, or stricter criteria during pipeline review. Training improves the average temporarily, until someone leaves, a new product launches, or a new region comes online. Inconsistent qualification is a structural problem. It requires a structural fix.

When qualification logic lives in human judgment, it varies. Different reps apply different criteria. Different regions have different standards. Different products receive different rigor. The pipeline looks healthy. The forecast is a lie.

An AI Marketing Agent applies qualification criteria, whether MEDDIC, BANT, or custom, consistently across every inbound conversation, 24 hours a day, regardless of which rep is online or what the SDR queue looks like that afternoon. Humans are alerted when edge cases require judgment. The system does not go unsupervised. It is governed.
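Consistent application with human escalation can be sketched as a single rule that every conversation passes through. The example uses BANT; the three outcomes and the rule that an unanswered criterion triggers human review are assumptions for illustration.

```python
from enum import Enum
from typing import Optional


class Outcome(Enum):
    QUALIFIED = "qualified"
    NOT_QUALIFIED = "not_qualified"
    NEEDS_HUMAN_REVIEW = "needs_human_review"


BANT_FIELDS = ("budget", "authority", "need", "timeline")


def qualify(answers: dict[str, Optional[bool]]) -> Outcome:
    """Apply the same BANT rule to every conversation.

    True means the criterion is met, False means it is not,
    and None or a missing key means the buyer never answered.
    """
    values = [answers.get(name) for name in BANT_FIELDS]
    if any(value is False for value in values):
        return Outcome.NOT_QUALIFIED
    if any(value is None for value in values):
        # Edge case: incomplete picture, so alert a human instead of guessing.
        return Outcome.NEEDS_HUMAN_REVIEW
    return Outcome.QUALIFIED


print(qualify({"budget": True, "authority": True, "need": True, "timeline": True}))
print(qualify({"budget": True, "authority": True, "need": True}))
print(qualify({"budget": False, "authority": True, "need": True, "timeline": True}))
```

Because the rule lives in one function rather than in each rep's head, the same inputs always produce the same label, regardless of region, product, or time of day.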

The result is simple: pipeline quality becomes a system property, not a rep skill. The forecast reflects consistently qualified opportunities, not a mix of rigorously and loosely qualified leads that all carry the same label.

Most revenue teams in 2026 are still measured on MQL volume. The risk is not just flat win rates. It is structural dependency: a pipeline that looks healthy only while ad spend is running and outbound volume is high. The moment either slows, the funnel empties.

The teams pulling ahead are not running harder on the same model. They are measuring AQL conversion rate, qualified meeting rate, and pipeline-to-close by conversation source.

The good news is that this does not require dismantling the existing stack. The change happens at the inbound engagement layer, in the gap between campaign traffic and sales-ready pipeline.

Deploy an AI Marketing Agent on the highest-intent page, run it alongside the existing MQL program for 30 days, and let the conversion data make the internal case.

If the pipeline is full and the win rate is flat, the fix is not more leads. Docket's AI Marketing Agent qualifies pipeline before it hits the sales team.