I spent several weeks evaluating five AI avatar providers for Docket. I tested idle behavior, measured concurrency limits, read the pricing footnotes, and tried to generate a custom replica of my own face. It failed at 66%.

This post documents what I found, the real gaps between what vendors pitch and what actually ships in production, and why we landed on HeyGen over everyone else.

If you're about to make the same decision, the framework might save you the weeks.

Why We're Building This

Docket is an AI Marketing Agent, voice-first, knowledge-grounded, built to engage and qualify inbound buyers in real time. It already supports voice and text. For most conversations, that works well. This is about adding the face.

The industry is moving. There is a growing camp that believes the avatar is not just a feature. It is the product. The entire bet is that replacing your website with a photorealistic face is the future of buyer engagement. No pages, no forms, no copy. Just an AI that looks like a person talking to your visitors.

I understand the logic. Eye contact and facial expression carry information that voice alone doesn't. There is a reason video calls feel more like presence than phone calls do.

But our approach is different. For Docket, the avatar is a toggle. It sits on top of voice and text, not underneath everything else. You can run voice plus text, voice only, or voice plus text plus a conversational AI avatar. The underlying STT, LLM, and TTS layers are identical regardless of mode. Avatar mode adds one participant to the room. That is the extent of the architectural change.

That is a different architectural bet from building avatar-first. It means the agent works without the avatar. The avatar makes it better but it does not make it work.

The avatar layer has no access to a separate knowledge base. It renders what the AI Marketing Agent says. The intelligence is in the agent: governed, grounded in approved product knowledge. The avatar is how that intelligence presents itself.

In practice, that means a buyer can land on your website, ask a real evaluation question about pricing or integrations, get an accurate answer drawn from your approved knowledge, and be routed to the right rep with a face delivering all of it. The voice and text version of this agent already drives the pipeline for our customers. The avatar is the next interface layer on top of a system that is already working. (add a specific metric or customer name here if approved for publication)

More importantly: we are not building this because we believe photorealistic face papers over weak intelligence. Those are two very different architectural philosophies. One starts with the face and builds outward. Ours starts with the knowledge layer (governed, accurate answers from approved product sources) and the avatar is one more way to deliver that. The sequence matters.

Why Real-Time Is a Different Problem

To understand why avatar quality is so hard to get right, it helps to understand how a voice agent pipeline actually works.

At a high level, a Docket conversation looks like this:

Visitor audio → STT (speech-to-text) → LLM → TTS (text-to-speech) → audio back to visitor

We use Deepgram for STT, GPT-4.1 as the LLM, and ElevenLabs for TTS. (We're moving to GPT-5.4 shortly. The avatar layer is model-agnostic, so the swap is one config change.) From the visitor's perspective, speech goes in and speech comes back out. Internally, it is a chain of conversions: audio to text, text through the model, text back to audio.

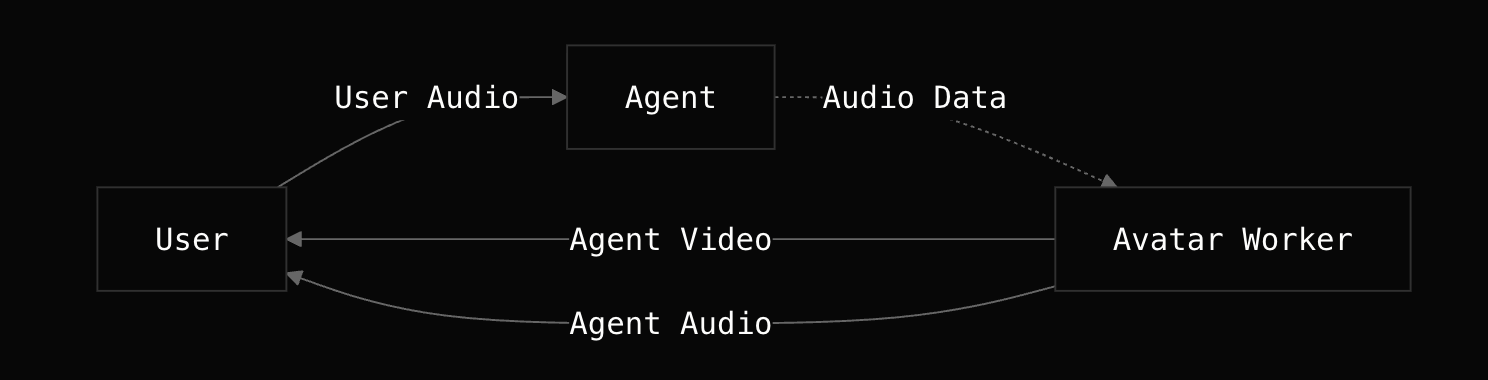

Adding an avatar inserts a fourth node into that chain: TTS audio output → avatar provider → synchronized video feed

The avatar provider receives the TTS audio stream in real time and must return a lip-synced video stream that stays synchronized with the audio. No buffering, no offline rendering. Every word the agent speaks needs a corresponding frame of video, generated and delivered with low enough latency that the mouth and the sound arrive together.

Real-time lip sync is the hardest constraint in the pipeline. Not the face, the timing. A marketing video created with modern generative AI models can be rendered in minutes and edited afterward. Here, the pipeline is live, and every node has a latency budget. The entire chain, from the LLM generating a response token to the visitor seeing a synchronized lip movement, needs to stay within a window tight enough that the conversation feels natural.

One part of this that doesn't get documented enough: interruption handling. Real conversations are not sequential: buyers cut in, change direction, and talk over the agent. We use Silero VAD, LiveKit's voice activity detection plugin, to detect when a user's turn is complete. The agent waits for a genuine pause before responding, not a fixed silence timer. Get this wrong and the agent either barges in too early or waits awkwardly too long. The avatar compounds any error here — a face that keeps moving while the visitor is mid-sentence reads as broken.

LiveKit, which we use for session orchestration, writes about this explicitly: latency, interruption handling, and lip-sync quality are fundamentally different engineering problems in a real-time conversational context versus a render pipeline.

The idle state is where most providers fail first, and it is the most honest signal of render quality. When no one is speaking, the avatar is not lip-syncing. It is just existing. The bad ones freeze, twitch, or fall into a repetitive loop: a circular eye movement, a mechanical head bob, a blinking pattern that feels algorithmic. The good ones breathe, micro-adjust, and sit in silence naturally.

This matters more than it sounds. Research from 2025 found that even subtle imperfections in idle behavior and eye movement reduce trust in AI avatars during professional interactions, not just comfort. For a marketing agent trying to earn buyer confidence during a technical evaluation, a twitching idle face is not a cosmetic issue. It undermines the entire thing.

How I Evaluated Providers

To understand why certain decisions were made, it helps to know how LiveKit's avatar model works at a high level. When you add an avatar to a LiveKit voice agent, you are adding a second participant to the room: an avatar worker. The agent session handles STT, LLM, and TTS. Instead of publishing audio directly, it routes output to the avatar worker, which renders and publishes synchronized audio and video back to the room. Full technical detail is in LiveKit's avatar docs.

This is why plugin availability was a hard gate. LiveKit's plugin model standardizes the avatar worker interface. With a plugin, integration is roughly five lines of code. Without one, you are building custom WebRTC track handling, audio routing, and session management from scratch. Weeks of work before you can even test quality.

At the time of my evaluation, LiveKit had plugins for twelve providers. I narrowed to five based on maturity, production evidence, and relevance to a B2B sales agent use case.

From there, I evaluated on four additional criteria:

Human-likeness

How close to real without triggering the valley. Tested stock avatars directly and, where possible, attempted custom replica generation.

Idle behavior quality

What the avatar does when nobody is talking. A two-second speech clip can look good from any provider. Fifteen seconds of silence is the real test.

Avatar concurrency limits

The number most providers bury in the enterprise tier footnotes. The question I kept asking: not "what's your quality like," but "how many buyers can actually see this avatar at the same time, on your base plan, in production?" If you are capped at five concurrent sessions and ten conversations are happening simultaneously, five buyers get no avatar. Session does not start. Face does not appear. This is a product reliability consideration, not a pricing one.

Pricing and plan scalability

Evaluated last, because quality and reliability have to come first.

The Five Providers and What I Found

Here is what each one looked like in practice.

Tavus

Tavus was among the first to ship a LiveKit avatar plugin. That production time counts. They have had more edge cases surface and resolve than newer entrants.

Their custom avatar capture is genuinely differentiated. The process requires approximately two minutes of footage across three recorded segments: listening, talking, and idling. The system builds the avatar from multi-angle facial data rather than synthesizing motion from a static image, the output quality reflects that. Standard processing takes three to five days. Enterprise takes twenty-four hours.

I tested this directly. My custom avatar generation got to approximately 66% and errored out. No explanation, no retry prompt. Multiple retries over roughly an hour eventually produced a working result. Not a broken pipeline, but not a smooth one either. If you are planning custom avatars on a deadline, budget time for this.

Growth plan: $395/month, up to 15 concurrent sessions. Enterprise: custom pricing.

One non-obvious finding: quality varies at the individual avatar level, not just the provider level. I tested multiple stock avatars and found meaningful differences between them. One had a persistent blinking pattern that read as nervous energy — not broken, just captured that way. Evaluate the specific options you intend to deploy, not just the best-case demos the provider shows you.

Verdict: Strong contender. Runner-up.

Anam

Anam's LiveKit plugin shipped in August 2025. The integration works. Quality and idle behavior are acceptable.

The constraint is concurrency. At approximately $299/month, the plan supports five concurrent avatar sessions. (need verification from internal team) In a production deployment with any meaningful traffic, five concurrent sessions is not a number you can build around.

Verdict: Technically capable. Ruled out on concurrency-to-price ratio.

Simli

Simli has a LiveKit plugin and entered the real-time avatar market around mid-2025.

The idle behavior artifacts were the most pronounced of any provider I tested — circular eye movement, mechanical head rotation with no natural variation. The rendering engine did not handle the transition between speaking and idle states cleanly. The face never felt at rest.

Verdict: Ruled out on quality.

Beyond Presence

Beyond Presence uses a static image as input and synthesizes lip-synced motion from it. The approach has a hard ceiling. What you get out is constrained by what a single image can encode — no depth information, no motion reference, no natural variation across the face over time.

The output reads as a mascot. For a use case representing a human-like AI agent in a B2B evaluation conversation, that ceiling is the disqualifier.

Verdict: Ruled out on quality.

HeyGen LiveAvatar

HeyGen has been building in the avatar space longer than any other provider in this evaluation. Their LiveAvatar product launched in October 2025 and is already in production at companies including Coursera, HP, and Bosch.

When I started the evaluation, HeyGen was on my watch list rather than the active shortlist — the LiveKit plugin for LiveAvatar had not yet shipped. By the time I completed my evaluation of the other four providers, the plugin had become available as part of livekit-agents v1.4. (exact release date of the LiveAvatar plugin in livekit-agents: need verification before publishing) At that point, the decision became straightforward.

Quality

The idle behavior is the most natural of any provider I tested. The micro-movements, blink patterns, and transitions between speaking and silence are closer to how a person actually sits in a conversation. Not perfect — but noticeably ahead of the other options with available plugins.

One honest limitation worth flagging: we currently have no control over the avatar's emotional expression. The avatar does not read the emotional tone of the TTS audio and mirror it — warmth, urgency, hesitation. What expression you get depends entirely on how the provider trained the underlying avatar model. For now, the face delivers the words accurately. The emotional register is flat.

The concurrency math

HeyGen LiveAvatar's Essential plan supports 20 concurrent sessions. Tavus's Growth plan at $395/month gives you 15. That gap, i.e., 5 additional concurrent sessions at a comparable price point — is the number that moved the decision.

Pricing model

HeyGen uses a credit-based model rather than a flat subscription. $100 buys 1,000 credits. In Lite Mode where you bring your own LLM and TTS provider, which is how our architecture works, each minute of avatar session costs 1 credit. Approximately 1,000 minutes of avatar time per $100. More granular to model than a flat subscription, but workable.

Session cap

The Essential plan limits individual sessions to 20 minutes. We are designing agent conversations to cap at 10–15 minutes: qualify, educate, route. That is not a workaround. A well-designed AI marketing agent should qualify, answer, and route within fifteen minutes. If a conversation needs longer, it needs a human, not a longer avatar session. We designed that ceiling deliberately.

On failover: if the selected avatar provider is unavailable when a session starts, HeyGen, Tavus, or otherwise, the avatar session does not initialize and the conversation falls back to voice and text mode automatically. The buyer gets a full conversation. No avatar, no error state, no broken experience. That fallback was a requirement before we shipped.

Is HeyGen the best avatar provider that will ever exist? Probably not. But it is the best option available right now that clears the quality bar, has a working LiveKit plugin, and supports the concurrency a production deployment needs. We will keep evaluating. The space is moving fast.

Verdict: Selected.

The Assumption Worth Questioning

The entire avatar race in B2B GTM is built on one premise: buyers trust human faces, so make the AI look as human as possible. More realistic. More expressive. More indistinguishable.

I kept pushing back on that premise throughout this project.

Some companies have bet their entire product on it. The avatar is not a feature — it is the strategy. The problem is that a face is an interface, not intelligence. When a buyer asks a real evaluation question likehow does pricing scale, what does the SOC 2 cover, does this integrate with a specific stack; a photorealistic face backed by general-purpose AI is going to fail them. Elegantly, maybe. But fail them.

Custom avatar production,the kind that produces a truly photorealistic result, takes weeks to months depending on the provider. That is a significant operational commitment before the first buyer conversation happens.

A 2025 study found that 48% of consumers actually prefer AI interfaces that are clearly non-human — specifically to avoid the discomfort of something that almost-but-not-quite crosses the uncanny line. (source: Vidboard AI, 2025) That number will shift as the technology matures. But it suggests the assumption that buyers need to be deceived into comfort is not as universal as the avatar-first camp believes.

A face is an interface. The intelligence behind it is what earns trust. We built in that order.

Where We Are

Docket now supports voice, text, and avatar. You can toggle between modes. The avatar layer runs on HeyGen LiveAvatar and Tavus via LiveKit, with the same voice agent underneath. We shipped with stock avatars. Custom replica support is next.

What I genuinely do not know yet is whether B2B buyers respond to avatars the way the industry assumes they will. The research pointed us to HeyGen. The architecture is live. The data comes next.

If you're building in this space and want to compare notes, I'm reachable at https://www.linkedin.com/in/arjun-a-i/

If you want to see the Docket AI Marketing Agent, including the avatar, in action, https://www.docket.io/

ABOUT THE AUTHOR:

Arjun is a software engineer at Docket, working on the voice and avatar stack that powers Docket's AI Marketing Agent. He joined Docket a year ago after his team merged into the company and has been building across the real-time conversation infrastructure since. Before Docket, he spent his college years working on computer vision and competing in hackathons, which is, it turns out, decent preparation for evaluating avatar rendering pipelines. 😀